Intro

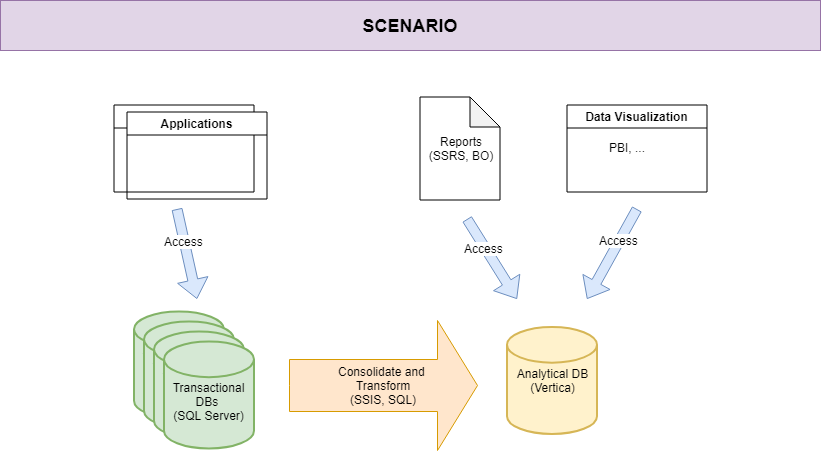

In the previous post we described the idea behind the automation we’re trying to implement in a scenario based on the MicroFocus Vertica database.

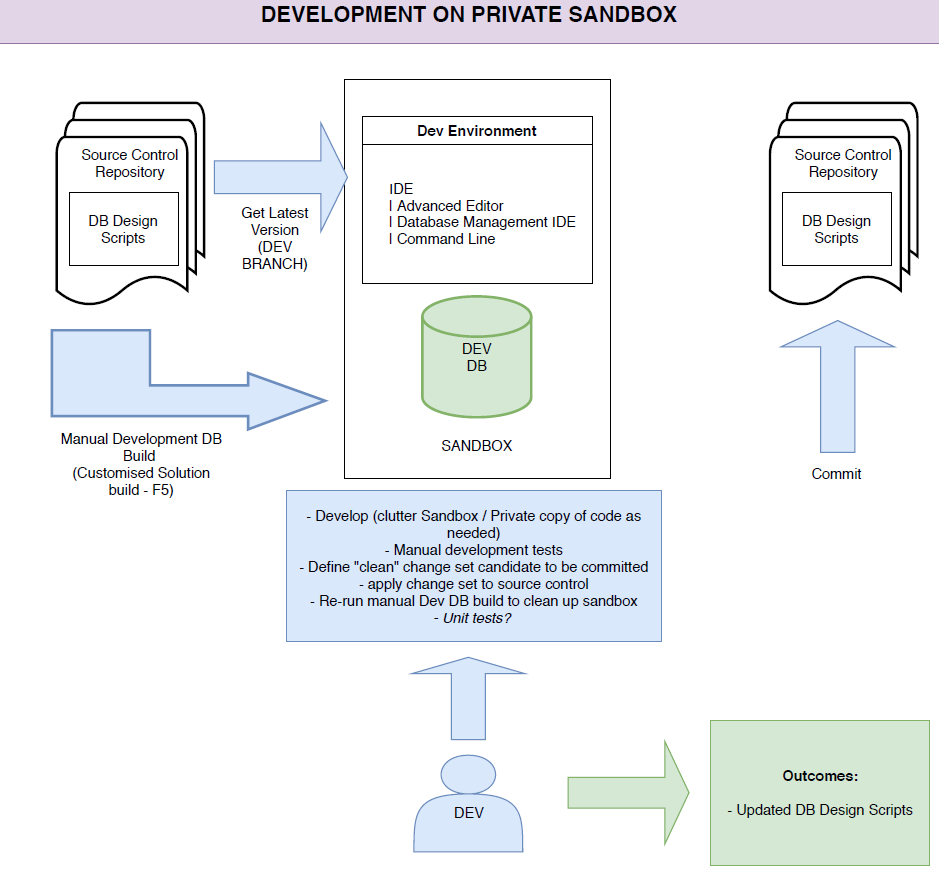

How it works

This “sandbox” is not a truly isolated development workstation. Let’s split it into two parts: the first for development on everything but Vertica (a local Windows workstation), the second for a Vertica instance (probably a Unix/Linux VM) shared between developers.

In this shared instance, each developer working on the solution gets a dedicated schema, so that everyone has their own “environment”.

The source control folder tree (TFVC source control, on-premises) is laid out on the desired branch as follows:

    /Project
        /Instance
            /Process1
                /_Master
                    schema.ps1
                    tables.ps1
                    views.ps1
                /Tables
                    Table1.sql
                    Table2.sql
                /Views
                    View1.sql
                    View2.sql
                Schema.sql
            /Process2
                /Tables ...
                /Views ...

As you can see, under the Project folder there is the Vertica database folder, which contains, schema by schema, all the .sql files with the DDLs for tables and views (CREATE TABLE and CREATE VIEW). You can also see the .ps1 files, which contain the list of executions in a specific, business-driven order.

The file for, let’s say, “Table1” can look like this:

    CREATE TABLE :SCHEMA.Table1
    (
        RowId int NOT NULL,
        RowStringValue varchar(30) NULL,
        CONSTRAINT PK_<schema>Table1 PRIMARY KEY (RowId)
    );

We’ve added a :SCHEMA parameter, which allows each developer to create their own schema as described before. This is the only way we’ve found to isolate developers in a shared Vertica instance while avoiding one instance per developer, which could be too resource-intensive for the available PCs. When running the application locally, before committing any changeset to source control, a simple tool executes the .sql files with the new schema name, in the order given by the .ps1 file.

The Tables.ps1 file can look like the following:

    param(
        [parameter(Mandatory=$true)]$hostname,
        [parameter(Mandatory=$true)]$port,
        [parameter(Mandatory=$true)]$user,
        [parameter(Mandatory=$true)]$psw,
        [parameter(Mandatory=$true)]$schemaName,
        [parameter(Mandatory=$true)]$scriptsFolder
    )

    # Run each DDL file in the required order; the schema name reaches vsql as the SCHEMA variable
    $schemaCommand = "vsql -h $hostname -p $port -U $user -w $psw -v SCHEMA=$schemaName -f $(Join-Path $scriptsFolder "Table1.sql")"
    Invoke-Expression -Command $schemaCommand

    $schemaCommand = "vsql -h $hostname -p $port -U $user -w $psw -v SCHEMA=$schemaName -f $(Join-Path $scriptsFolder "Table2.sql")"
    Invoke-Expression -Command $schemaCommand

You may notice the term “vsql”, which is the command-line client provided by Vertica for executing queries. Further information is available in the Vertica documentation.
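
As a possible variation on Tables.ps1 (not the original script), the same vsql flags can be kept as an argument array and passed through the call operator instead of being concatenated into a string for Invoke-Expression. This tends to be more robust when a password contains spaces or quotes. The function name Get-VsqlArguments, the relative Tables folder and the sample values are hypothetical; the sketch only prints the command, and the real call is left commented out because it assumes vsql is on the PATH.

```powershell
# Hypothetical helper (not part of the original scripts): returns the vsql
# arguments used in Tables.ps1 as an array, one element per token.
function Get-VsqlArguments {
    param(
        [Parameter(Mandatory = $true)][string]$HostName,
        [Parameter(Mandatory = $true)][int]$Port,
        [Parameter(Mandatory = $true)][string]$User,
        [Parameter(Mandatory = $true)][string]$Psw,
        [Parameter(Mandatory = $true)][string]$SchemaName,
        [Parameter(Mandatory = $true)][string]$ScriptPath
    )
    @(
        '-h', $HostName,
        '-p', "$Port",
        '-U', $User,
        '-w', $Psw,
        '-v', "SCHEMA=$SchemaName",
        '-f', $ScriptPath
    )
}

# Sample values for illustration only.
$connection = @{
    HostName   = 'MyHost.Hos.Ext'
    Port       = 1234
    User       = 'user'
    Psw        = 'password'
    SchemaName = 'MYSCHEMA'
}
$scriptsFolder  = 'Tables'                       # assumed relative folder
$orderedScripts = @('Table1.sql', 'Table2.sql')  # business-driven order

foreach ($sqlFile in $orderedScripts) {
    $vsqlArgs = Get-VsqlArguments @connection -ScriptPath (Join-Path $scriptsFolder $sqlFile)

    # Show what would run; uncomment the last line to execute it for real.
    Write-Output ('vsql ' + ($vsqlArgs -join ' '))
    # & vsql @vsqlArgs
}
```

The list of files stays static and ordered by hand, as in the original script; only the way the arguments are handed to vsql changes.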

Also, usernames and passwords will be stored in an external config file (or a secured API), like the following one:

    {
        "host": "MyHost.Hos.Ext",
        "port": 1234,
        "user": "user",
        "psw": "password",
        "schemaName": "MYSCHEMA"
    }

We’ve got the DDLs, the PoSh files for executing them and the Vertica command line. That’s good but, in a development environment, we also need a set of tools that keeps these artifacts on a single pipeline. This is why we’ve created a “builder” script, like this one:

    # $currentFolder and $scriptsFolder are assumed to be set earlier in the script
    $config = Get-Content (Join-Path $currentFolder "Build-Config.json") | Out-String | ConvertFrom-Json

    # Build the call to Tables.ps1, passing the connection settings from the config file
    $schemaCommand = $(Join-Path $scriptsFolder "Tables.ps1")
    $schemaCommand += " -hostname $($config.host)"
    $schemaCommand += " -user $($config.user)"
    $schemaCommand += " -port $($config.port)"
    $schemaCommand += " -psw '$($config.psw)'"
    $schemaCommand += " -schemaName $($config.schemaName)"
    $schemaCommand += " -scriptsFolder $scriptsFolder"

    Invoke-Expression -Command $schemaCommand

This is another layer of management, which allows us to organize every part of the DDLs to be executed against Vertica.
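
A minimal sketch of how the builder could check the configuration and hand it to Tables.ps1 with a splatted hashtable rather than a concatenated string. This is an assumption about one possible approach, not the script we actually use: the JSON is inlined so the example runs on its own, the key check and the `$tablesParams` name are hypothetical, and the final call is commented out because it needs the real Tables.ps1 and a reachable Vertica instance.

```powershell
# Sample config inlined so the sketch runs on its own; the real builder
# reads Build-Config.json from disk.
$configJson = @'
{
  "host": "MyHost.Hos.Ext",
  "port": 1234,
  "user": "user",
  "psw": "password",
  "schemaName": "MYSCHEMA"
}
'@
$config = $configJson | ConvertFrom-Json

# Fail early if a key is missing or empty.
foreach ($key in 'host', 'port', 'user', 'psw', 'schemaName') {
    if (-not $config.$key) {
        throw "Build-Config.json is missing '$key'"
    }
}

$scriptsFolder = 'Tables'   # sample value

# Keys match the parameter names declared by Tables.ps1.
$tablesParams = @{
    hostname      = $config.host
    port          = $config.port
    user          = $config.user
    psw           = $config.psw
    schemaName    = $config.schemaName
    scriptsFolder = $scriptsFolder
}

Write-Output "Would call Tables.ps1 for schema $($tablesParams.schemaName)"
# & (Join-Path $scriptsFolder 'Tables.ps1') @tablesParams
```

With splatting, each value is bound to its parameter as-is, so no extra quoting around the password is needed.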

Note: our scripts destroy and rebuild any given SCHEMA, but this is the way we like it.

Now, let’s see the possible scenarios.

Start from scratch or get started

When someone wants to start from scratch, this is the pipeline to follow:

- get the latest version of the branch;

- check and change the configuration file (JSON);

- execute the create-vertica-database-from-scratch.bat file (it contains our PowerShell “build” script);

- that’s it, we’ve got a new schema in Vertica, empty and ready to be consumed.

If you want to preserve your data, this is not the right path for you. Executing the “builder” tool is optional.

New development

When a developer wants to make and try out a changeset:

- change the Visual Studio application (SSIS or SSRS here) when needed;

- change the Vertica schema (adding tables, columns and so on);

- get the .sql file of a new object or change the .sql file of an object which has been updated;

- put them into the TFVC file structure;

- change the .ps1/.txt files if any DDL has been added (or if something that affects the order occurs);

- build the Visual Studio application and try it;

- when everything works well, check in.

Now, everyone can get the latest changes in a CI way.

Get delta changes

When a developer gets the latest changes, which contain an updated version of the Vertica objects, but wants to preserve their data, things are a little trickier. The person who made the change could share the ALTER script in a collaborative chat tool. This is neither reliable nor comfortable but, without a comparison tool, there isn’t a better way to make this happen.

That being said, we’ve implemented our own diff-script generator, based on the analysis of Vertica metadata (the catalog, browsing v_internal objects). So, after getting the latest version, the developer runs a generate-diff-script.bat tool and lets it execute the generated ALTER script. Tricky, but it works like a charm (we may talk about this comparison tool in a future post). Anyway, we’re looking forward to hearing updates from MicroFocus. Hopefully, we’ll get an official diff tool from them soon!
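
To give an idea of the diff step, here is a deliberately simplified sketch, not our actual generator. It assumes the column metadata of both schemas has already been loaded into objects with TableName, ColumnName and DataType properties (a hypothetical shape), and it only covers columns that were added. The function name Get-AddColumnStatements and the CreatedOn column are made up for the example.

```powershell
# Hypothetical sketch: emits ALTER TABLE ... ADD COLUMN statements for
# columns that exist in the reference metadata but not in the developer's.
function Get-AddColumnStatements {
    param(
        [Parameter(Mandatory = $true)][string]$SchemaName,
        [Parameter(Mandatory = $true)][object[]]$ReferenceColumns,
        [object[]]$CurrentColumns = @()
    )

    # Index the existing columns by "table.column" (case-insensitive).
    $existing = @{}
    foreach ($col in $CurrentColumns) {
        $key = ('{0}.{1}' -f $col.TableName, $col.ColumnName).ToLower()
        $existing[$key] = $true
    }

    # Emit one statement per column missing from the developer's schema.
    foreach ($col in $ReferenceColumns) {
        $key = ('{0}.{1}' -f $col.TableName, $col.ColumnName).ToLower()
        if (-not $existing.ContainsKey($key)) {
            'ALTER TABLE {0}.{1} ADD COLUMN {2} {3};' -f `
                $SchemaName, $col.TableName, $col.ColumnName, $col.DataType
        }
    }
}

# Sample metadata for illustration only.
$reference = @(
    [pscustomobject]@{ TableName = 'Table1'; ColumnName = 'RowId';          DataType = 'int' },
    [pscustomobject]@{ TableName = 'Table1'; ColumnName = 'RowStringValue'; DataType = 'varchar(30)' },
    [pscustomobject]@{ TableName = 'Table1'; ColumnName = 'CreatedOn';      DataType = 'timestamp' }
)
$current = $reference | Where-Object { $_.ColumnName -ne 'CreatedOn' }

Get-AddColumnStatements -SchemaName 'MYSCHEMA' -ReferenceColumns $reference -CurrentColumns $current
```

With the sample data, the only statement produced is the ADD COLUMN for CreatedOn on MYSCHEMA.Table1. Dropped columns, type changes and constraints would need their own handling.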

Conclusions

I’ve just shown how we manage table DDLs and how we’ve created the PowerShell scripts, but the real scenario is more complex. We have to drop the Vertica schema first (DROP SCHEMA with CASCADE), then re-create the new parametrized schema, then the tables, then the views and so on. Sometimes we’ve got Vertica schemas that reference each other, so we have to create the tables for all of them first, then the views. This business logic is captured in how we write the “build” PowerShell script, and the automated build process will follow it too.

Also, the build process is not always “straight”. Some of the business processes need to be managed in a dedicated way. Cross-references will occur, but handling them is the job of the “builder”, both in the manual build and in the automated one. Moving this responsibility to the build process makes us more comfortable with our development solution.

Last but not least, the manual development build process lets the developer choose whether to re-create the database or not. Developers should not waste time managing things that a script can do repeatedly and efficiently. This is why we kept the PowerShell scripts fairly static instead of writing complicated business logic: just add vsql invocations in the right order, and that’s it.
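
The ordering described above can be sketched as a list of phases applied to every schema in turn, so that all tables exist before any view is created. This is only an illustration under assumptions: the function name Get-BuildSteps, the phase labels and the schema names are hypothetical, and the real builder runs vsql for each step instead of listing it.

```powershell
# Hypothetical sketch: lists the build steps phase by phase, so that every
# schema gets its tables before any schema gets its views.
function Get-BuildSteps {
    param([Parameter(Mandatory = $true)][string[]]$Schemas)

    # Phase order matters: views may reference tables in other schemas.
    $phases = 'DropSchema', 'CreateSchema', 'Tables', 'Views'

    foreach ($phase in $phases) {
        foreach ($schema in $Schemas) {
            [pscustomobject]@{ Phase = $phase; Schema = $schema }
        }
    }
}

# Sample schema names for illustration only.
Get-BuildSteps -Schemas 'PROCESS1', 'PROCESS2' | Format-Table -AutoSize
```

Keeping the phases in a flat, ordered list mirrors the static style of the .ps1 files: the order is decided by whoever edits the list, not computed from dependencies.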

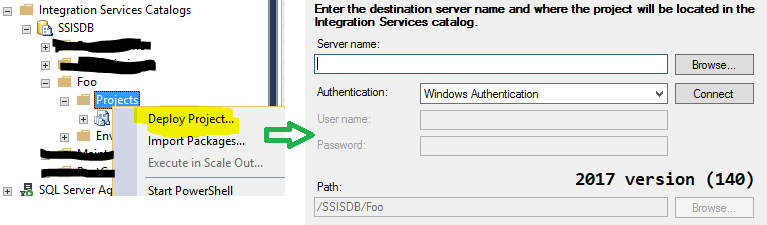

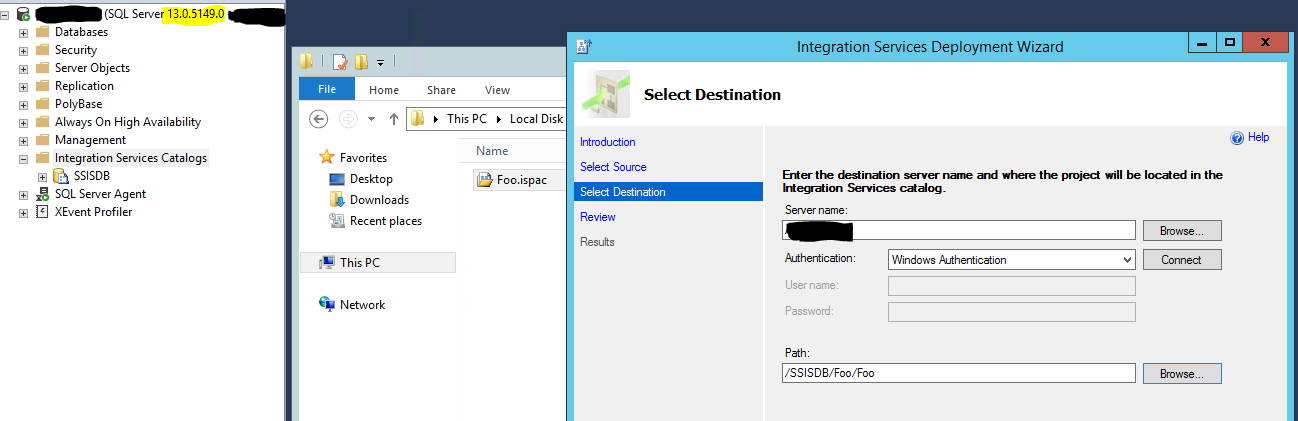

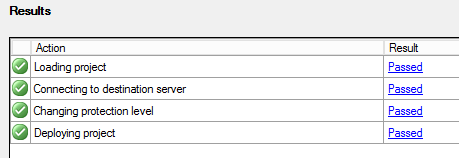

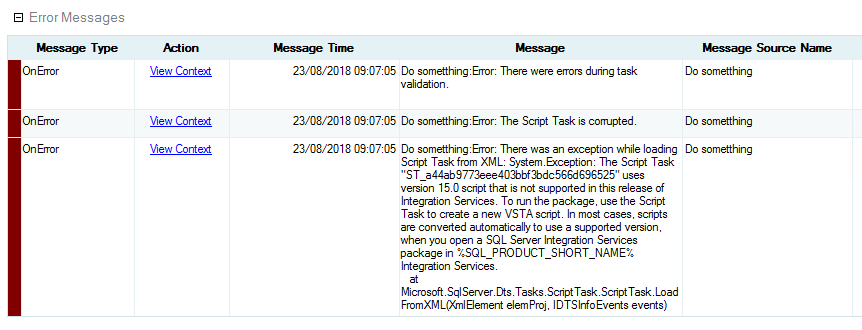

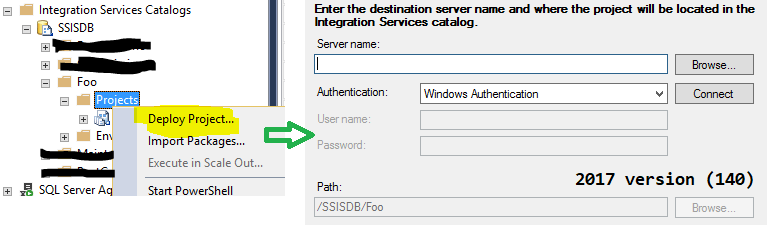
